ML4EO 2025 and why we're back

Last year we supported ML4EO, the Machine Learning for Earth Observation conference in Exeter. It brought together researchers and practitioners showing what's now possible with satellite data, foundation models, and geospatial AI across conservation monitoring, carbon markets, and environmental integrity. We're sponsoring again this year because we think this community matters.

What machine learning for earth observation gets wrong

The advances in geospatial AI are exciting, and this is why we are supporting the conference. Foundation models trained on petabytes of satellite imagery. Embeddings that capture landscape similarity without hand-crafted features. New ways to monitor forests, map habitats, and track land use change at global scale. It's what we work on every day.

But one of the talks that stuck with us most from ML4EO 2025 wasn't about a new model or a new dataset. It was about caution. Karen Anderson and Bri Pickstone from Exeter presented "Why ML != EO: From Black-Box to the Accuracy Paradox", arguing that the remote sensing community is adopting machine learning faster than it's thinking about what these tools actually represent. We agree.

Remote sensing scientists work with composites every day: mosaics stitched from images captured at different times, from different viewpoints. Few people stop to question them. In an age of machine learning for earth observation, that lack of scrutiny is getting more pronounced. Models are built on composites that have not been questioned. Pixels are treated as more accurate than ground truth, even though the same tree can look completely different one day apart because of sun angle. Pixels are useful abstractions, but they are abstractions.

Why this matters for carbon and biodiversity conservation

This is where it connects to what we do. If you can't be certain what a pixel represents, what does that mean for carbon credit baselines? For conservation monitoring? For any claim that says "this forest is intact" or "deforestation happened here"?

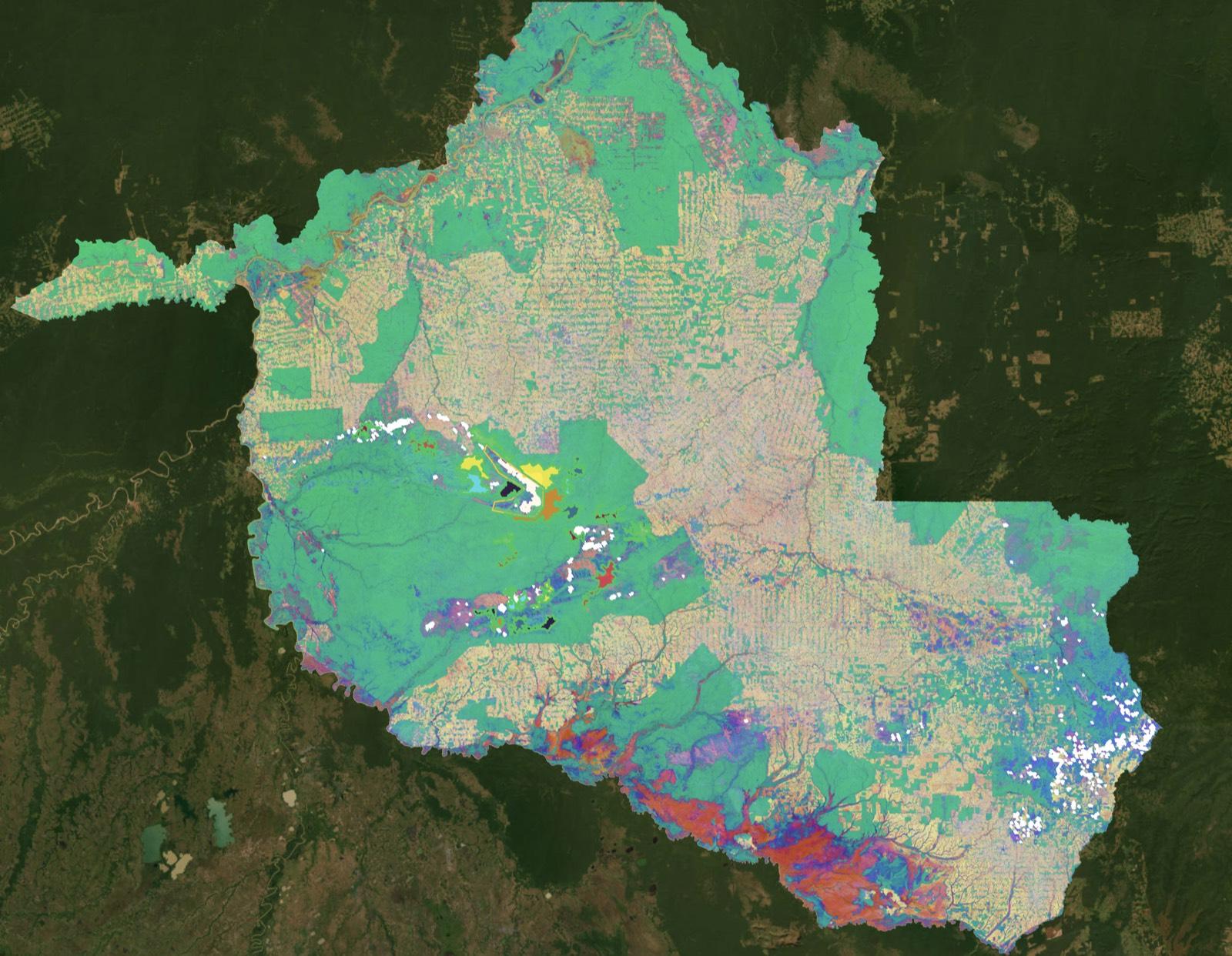

Deforestation doesn't occur on a pixel. It occurs across landscapes, at different scales, driven by processes that don't respect grid boundaries. The question isn't "what class is this pixel?" It's "what would have happened here without intervention?" That's a fundamentally different question, and it needs different tools. At belian.earth we work with embeddings rather than pixel classifications. We compare trajectories across larger scales rather than labelling individual pixels. We do this not because it's fashionable, but because the pixel-level assumptions don't hold up when the stakes are real.

Consider the accuracy paradox. A land cover map with 92% overall accuracy sounds good. But at ML4EO, Bri showed that heathland was correctly detected only 34.8% of the time and was otherwise misclassified as grassland. High overall accuracy was hiding a catastrophic failure for the habitat class that actually mattered. As Karen highlighted, "finer resolution data does not always equal better quality information." When decisions about carbon credits or conservation outcomes depend on these classifications, that gap matters.
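To make the paradox concrete, here is a minimal Python sketch of how a high overall accuracy can coexist with a very low detection rate for one rare class. The confusion matrix, the class names, and the function names are all invented for illustration. They are not the figures or the method from the talk, and the counts were chosen only to give a similar shape of result.

```python
# Illustrative only: these counts are invented, not data from the ML4EO talk.
# Rows are the true class, columns are the predicted class.
CLASSES = ["grassland", "woodland", "heathland"]
CONFUSION = [
    [580, 15, 5],   # true grassland
    [8, 290, 2],    # true woodland
    [60, 5, 35],    # true heathland (rare, mostly predicted as grassland)
]


def overall_accuracy(matrix):
    """Share of all samples that fall on the diagonal (correctly classified)."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


def per_class_recall(matrix, classes):
    """For each true class, the share of its samples that were detected."""
    return {
        name: matrix[i][i] / sum(matrix[i])
        for i, name in enumerate(classes)
    }


if __name__ == "__main__":
    print(f"overall accuracy: {overall_accuracy(CONFUSION):.1%}")
    for name, recall in per_class_recall(CONFUSION, CLASSES).items():
        print(f"recall for {name}: {recall:.1%}")
```

In this made-up example the map scores just over 90% overall while heathland is detected about a third of the time, because the common classes dominate the headline number. Reporting per-class recall alongside overall accuracy is one simple way to surface that kind of failure.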

Come find us at ML4EO 2026

We will be at ML4EO 2026, where we're presenting "Better Baselines for Carbon Markets: Using Earth Observation Foundation Models to Build Credible Counterfactuals."

This year's keynotes include Dr Emily Lines (Cambridge), who is developing new methods for monitoring forest structure and biodiversity using remote sensing from the ground to satellites, and Tomislav Hengl (OpenGeoHub) on open geospatial science, which we are big supporters of. It's a strong programme.

The workshops extend the conversation. Jakub Nowosad is running a session on where your models can be trusted, using spatial cross-validation to test whether predictions hold outside training areas. It's exactly the kind of rigour the field needs more of. IBM are back with hands-on sessions on TerraTorch and foundation model fine-tuning. And there are rumours of an introduction to TESSERA, the Cambridge geospatial foundation model, from the lead developer. For anyone working with earth observation embeddings, these are worth the trip alone.

If you're working in conservation monitoring, carbon markets, or environmental integrity and want to talk about what credible counterfactual baselines look like, come find us.

